Let’s start with AI — and the increasingly concerning tendency to trust software that’s accountable to no one.

Think about your work as a professional in a fleet or safety role. Your job is to weigh context: prevailing laws, company culture, internal policies, budgets, and your own experience built over time through education, networking, and collaboration.

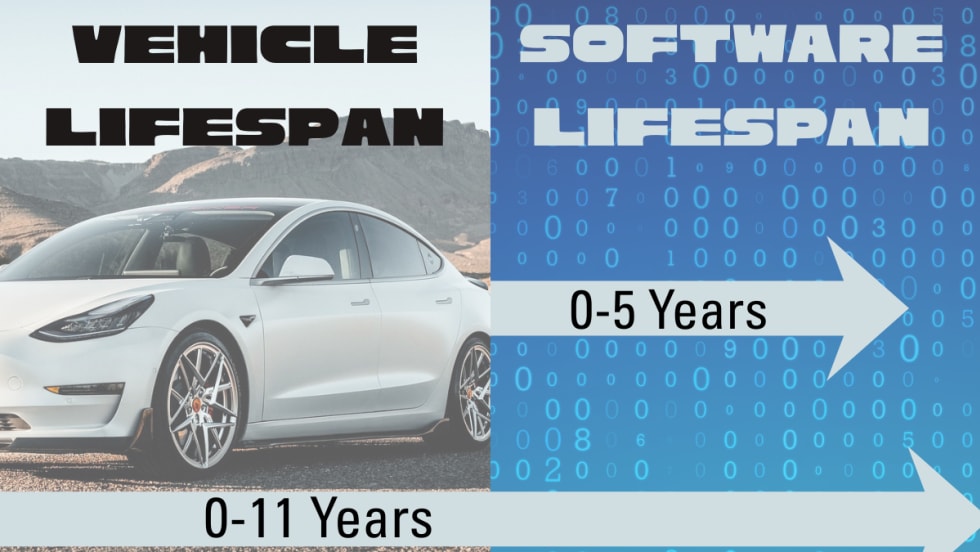

Now consider how technology has been steadily integrated to improve compliance with safety policies and fleet best practices. These efficiencies allow fewer experts to manage more vehicles and employees — and there’s nothing wrong with that. But there’s a limit to how much you should outsource to AI.

While it’s tempting to rely on AI agents to identify risks or inefficiencies, what you surrender in return is your critical thinking — and, more importantly, your organization’s accountability. Best practices in fleet and safety aren’t algorithms; they’re the result of people sharing experience, learning, and refining approaches over the years.

Those practices evolve as professionals engage with associations such as NAFA, NETS, and AFLA, and attend our own BBM events, such as the Fleet Forward Conference and Government Fleet Expo. As we embrace new AI-enabled tools, we must also stay grounded in real-world discussion and peer learning. That’s why in 2026, our Fleet Fast Podcast on Spotify will feature more than 40 hours of sessions recorded at the Fleet Forward Conference, so that human dialogue remains central.

Quick Pulse Check

As 2026 begins, budgets are set, and compromises loom. You have two choices:

Subscribe to software that uses AI to guide management decisions as it learns from your data, or

Hire an intern — invest in a person — and pass along your experience as you make decisions yourself.

You probably can’t afford to do both.

I’ll admit my bias: I love technology. I’ve helped organizations deploy data-driven systems that delivered remarkable safety and efficiency gains. But none of that relied on AI to make the decisions; it was skilled professionals interpreting data who produced the results. That is why, in 2026, I’d pick an intern over an AI upgrade.

So, here’s the real question: if you had to choose between an AI platform that could replace the value of your critical thinking and a human you could mentor toward succession, both costing the same, which would you choose?

The Human Edge

Are you prepared to learn and retain knowledge, or only to type prompts and accept whatever response comes back?

The less we practice retaining knowledge, the harder it becomes. You might feel efficient multitasking through prompts, but your software will always be the better multitasker. Each time you train AI by rewarding its responses, you’re also training your own replacement.

For now, your value lies in the knowledge you share — but within a year or two, if you don’t evolve, you may find yourself needing a completely new role.

In the short term, your company wins. In the long term, both you and your organization lose if mentorship and human judgment fade away.

As you weigh your choices — mentoring new people versus investing in more software — remember: You can’t technology your way into a safety culture. You can’t technology your way into efficiency growth. And you can’t technology your way into 2026.

It’s here, it’s real — and we’re in this together.